Displaying items by tag: ibm

Introduction

The history is an important part of the requirements management tool and the purpose of this article is to explain functions and capabilities of DOORS Next for history maintenance.

The Challenges to Managing History in DOORS Next Generation

On the very basic level history of requirements management items in DOORS Next is organized on artifacts level and is presented as revisions and audit history. This information is accessible for artifacts via ‘Open history’ action in the menu. The first tab you see when you open history is ‘Revisions’, it is splitted on ‘Today’, ‘Yesterday’, ‘Past week’, ‘Past month’ and ‘Earlier’ sections which include different versions of an artifact and baselines of project area or component. When you switch to ‘Audit history’ tab you see only versions of a current artifact with explanation of actions performed on it and changes which were created with information on date and time and author of it. Revisions can be restored to the current state (except revisions which were created with linking operations).

Another source of history of an artifact are system attributes which preserve information on date and time of creation and modification of an artifact and a user who created and modified an artifact. These attributes are updated by the application on creation of an artifact (Created On for date and time of creation and Created By for username) and Modified On and Modified by for the latest modification. Additionally, if you use ReqIF import to add artifacts, attributes with prefix ‘Foreign’ will show you related information from the source.

Of course, a revision list and attributes of a single artifact is not enough to manage requirements history, so versions of artifacts are aggregated to baselines. The first kind of baselines is a DOORS Next baseline, in other words it can be explained as a snapshot which includes certain revisions of artifacts. Baselines are created to preserve some agreed state of requirements.

When we are talking about artifacts revisions It is meaningful to mention that module artifacts revisions are specific - they are created when module structure is changed (set and / or order of included artifacts) or attributes of module itself. So when you edit an artifact in module context without changing position of this artifact in module - you do not create new revision of module automatically. To capture the state of a module you need to create a DOORS Next baseline.

DOORS Next with configuration management capabilities has more options to manage history of requirements. First of all - streams, which allow you to have parallel timelines for different variants of requirements. All requirements in DOORS Next have their initial stream, which is the default timeline for requirements changes. DOORS Next has an option to create a parallel timeline using additional streams, which is mostly used to manage variants of requirements.In this case usually some existing version of requirements is used as an initial state for a new stream. And changes of artifacts in a new stream will not affect the initial stream - revisions of artifacts created in a new stream are visible only in this stream unless the user initiates synchronization. During synchronization the user has options with merging approaches, and one of them is using changesets, which is explained below.

Changesets are another kind of data set in DOORS Next which can be observed in the audit history. There are two types of changesets - user created changeset (name of changeset in this case is specified by user) and internal changeset, which is a reference for changes in the audit history. Internal changesets can be found in the audit history and can be also used as an option to deliver changes across streams. Created by user changesets aggregate small changesets automatically created by DOORS Next, which can be found in audit history and in the merging menu when you deliver changes from one stream to another. Both types of changesets can be found on stream’s page, and if a stream is allowed to be edited only via changesets - it means, users are forced to create a changeset to edit requirements in a stream - a list of changesets of a stream gives you a good representation of history for a stream.

Another functionality to manage history is Global Configuration baseline. When you enable DOORS Next project area for configuration management, links between requirements in different components are created via Global Configuration stream - you need to switch to Global Configuration context to create a link across DOORS Next components and also to see such links. As each component is baselined independently in DOORS Next, in order to preserve cross-component linkage state you need to create a Global Configuration baseline. When you perform this action, baselines are created on each component level automatically and included to a Global Configuration baseline. Switching to this baseline in the future will show you the exact state of linking at the moment of global baseline creation - proper links between proper revisions of artifacts.

Specialized solutions, Approaches, Tips

- As mentioned above, baselines are created on component level (or project area level if project area is not enabled for configuration management). When number of baselines grows, some maintenance of baselines is required - to shorten the list of baselines, some of them need to be archived

- To help users with navigation in list of baselines we provide special widget, which filters from the flat baseline list those baselines which were created in a certain module context

Introduction

Deleting information from any document is something to think about twice may be thrice. But deleting a requirement from a specification is not as simple as deleting a sentence. A requirement is an object which holds not only the specification sentence but other information like name-value pair attributes, history, link information etc.

In this document we will try to explain possible ways to approach how to delete requirements

Challenges

Sometimes it is required to discard some information from the project for various reasons. But even in such cases, when specification information is discarded, there are still some risks as follows:

- It may break the integrity of the overall information map.

- The information piece to be discarded may not be needed in one configuration but may still be critical in others.

- The information piece to be discarded now, may still be an important component of an audit trail

- The information itself may have no value anymore, but the links it bears, my still constitute some value

So when we decide to delete any information from the requirements base, we should be considering several cases. Or somebody has to pre-consider for us.

How to delete requirements from DOORS Next Generation

How can we delete any requirement from DNG? Or what is the best approach to delete requirements from the requirements database?

For the reasons mentioned above, deleting requirements from GUI doesn’t exactly remove the data from the database. It just gets unaccessible. But to keep the integrity intact, any deleted requirement, still occupies a placeholder in the database.

Soft Delete of Artifacts

Since any “delete” operation via the GUI doesn’t cause the physical deletion of the data from the database, and in spite of this result, the data is still permanently unreachable when deleted, may be we can consider a “soft” delete operation.

It is a widely accepted approach to organize requirements in modules within DNG. Like we create/edit requirements within module, we also tend to execute the delete command in modules. This command is called as “Remove Artifact”:

What we are doing with this command is not actually deleting the requirement, but removing it from the module. So it will remain accessible in the base artifacts and folders.

Also, by design, there is a concept of requirement reusability. This means this requirement may coexist in other modules.

This is why, when removing a requirement from a module it is not “deleted” by default. However if the artifact is present only in one module, then there is an option to delete it via “remove” comment as follows:

If the artifact to be removed doesn’t exist in any other module, this option permanently deletes it.

The permanent deletion of the artifact will still leave the artifact on the database for the integrity of the data. But it will not be possible to retrieve this artifact any more.

So we can recommend not to permanently delete any artifact but remove. However there might still be some issues with removing the artifact from the module:

When an artifact is removed from the module, the specification text, its key-value pair attributes, history, all information will remain. Only its link to the module it belongs to will be broken. But there is one important piece of information else which gets broken. The link to other artifacts! In this example we see that the requirement is linked to another requirement in another module. When the artifact is removed from the module, we use that link as well. So when we retrieve it back to the module, it won’t have the link information anymore:

Sometimes our clients may prefer this behavior, sometimes not. For those, of which this is not preferable, we generally consult a “softer” delete operation. We advise to define a boolean attribute called “Deleted” with default value “false”. This attribute is assigned to all artifact types and instead of deleting the artifact, simply change the boolean value from “false” to “true”.

Of course it is not enough to do this. Also we need to define a filter for every view which filters out the “Delete” attribute “true” valued requirements.

Hard Delete of Artifacts

Hard delete of artifacts can be considered as deleting the artifacts with the option “If the artifact is not in other modules, permanently delete it.” selected.

But this will still not be sufficient if the artifact is being used in more than one module. To make sure a hard delete, first remove it from the modules it is being used in.

You can used the information “in modules”

Then, just simply locate the base artifact in the folder structure and select “Delete Artifact” from the context menu.

Upon confirming the deletion it will be permanently deleted:

This operation will still not delete the artifact from other configurations if there are more than one stream and the artifact appears in those streams.

How to clean up requirements

As mentioned above, the requirements even deleted from GUI are not deleted from the database for various reasons. Most of which is to maintain the data integrity and database indices. Theoretically, after every physical deletion of the artifacts a reindexing and database maintenance scripts should be run. This would not make sense for a daily operation done by an end user.

For those artifacts which are permanently deleted from the GUI, there is a repotools command which helps to remove from database as well. This is deleteJFSResources command. However, use it with extra precaution and please review the information in the link below:

https://www.ibm.com/support/pages/deleting-data-permanently-doors-next-generation-project

Summary:

Making use of configurations, streams and modules complicates the deletion concept. We may loose information where we don’t expect. Also we may get results even after properly deleting artifacts. Since they will still be available in other configurations. That is why we generally advise to use the “Deleted” attribute approach and implement the filtering of non-deleted artifacts.

Installation of IBM (Engineering Lifecycle Management) ELM

This article describes our experience in implementing the ELM (Engineering Lifecycle Management) product from IBM.

Introduction

IBM ELM applications are the leading platform for complex product and software development. ELM extends the standard functionality of ALM products. It provides an integrated end-to-end solution that offers complete transparency and tracking of all technical data, through requirements, testing and deployment. ELM optimizes collaboration and communication between all stakeholders, improving decision-making, productivity, and overall product quality. This product has undergone a significant update and has also changed its name from CLM (Collaborative Lifecycle Management) to ELM (Engineering Lifecycle Management).

The main differences between CLM and ELM relate to the change in product names:

- Rational DOORS – in ELM it is called IBM Engineering Requirements Management DOORS Family (DOORS)

- Rational DOORS Next Generation (DNG) – in ELM it is called IBM Engineering Requirements Management DOORS Next (DOORS Next)

- Rational Team Concert (RTC) – in ELM it is called IBM Engineering Workflow Management (EWM)

- Rational Quality Manager (RQM) – in ELM it is called IBM Engineering Test Management (ETM)

- And many more...

Since version 6.0.6.1, the user interface has already changed. However, some applications have not had their names changed, such as Report Builder, Global Configuration Management, Quality Management, etc.

Other differences between CLM and ELM include the fact, that ELM supports different web application servers, operating systems, and databases. For maximum flexibility in adopting new advanced features and to simplify potential future migration to or from:

- IBM ELM on Cloud SaaS

- IBM ELM as a Managed Service

- IBM ELM containerized on RedHat OpenShift, which is currently under development

The current version that we are installing is 7.0.2 SR1 (ifix15). This product is primarily aimed at customers in the fields of healthcare, military, transportation and many other critical areas of industry and manufacturing. Among the main common requirements in these areas of the industry is speed, reliability, flexibility, and security. For the effective and reliable functioning of the ELM solution, initial analysis of requirements and planning is important.

Challenges

After defining the client's requirements (required applications, architecture), the actual deployment of the ELM product is approached. During the design, we consider the client's requirements, adapt the architecture to the already existing infrastructure with the technical requirements of the ELM product. The ELM product supports implementation on Linux (RedHat), Windows and IBM AIX operating systems.

IBM ELM offers several applications, some of which are listed below:

- JTS (Jazz Team Server)

- CCM (Change and Configuration Management)

- RM (Requirements Management)

- QM (Quality Management)

- ENI/RELM (IBM Engineering Lifecycle Optimization - Engineering Insights)

- AM (IBM Engineering Systems Design Rhapsody – Model Management)

- GC (Global Configuration Management)

- LDX (Link Index Provider)

- Jazz Reporting Service, which includes more applications:

- RS (Report Builder)

- DCC (Data Collection Component)

- LQE (Lifecycle Query Engine)

- JAS (Jazz Authorization Server)

- RPENG (Document Builder) – which is implemented as a separate application/system

For large infrastructures, we recommend implementing each application on a separate server. That, however, significantly increases the financial requirements. For fewer lower requirements on ELM, we look for the best possible balance between performance and reliability, taking into consideration their possible financial constraints to achieve the best results. The optimization of the IBM ELM solution can also consist of the fact, that several applications will be deployed on one server at the same time. The implementation of multiple applications on one server is used for less frequently used applications. Based on our experience, we have verified the following logical arrangement of applications on servers (ASX):

- AS1: JTS, LDX, GC

- AS2: QM, CCM

- AS3: DCC, RPENG

- AS4: LQE, RS, RELM

- AS5: RM

For a better understanding of the architecture, the following figure shows a proposal for a possible logical topology:

Preferred Solutions

The recommendation for large customers is to install each application on a separate server, if the technical and financial limitations of the customer's environment allow it. However, this results in increased costs for the server, which consist of installations, licenses, upgrades, and "resources" necessary for the operation of the given solution.

For small and medium-sized customers, based on an initial analysis, we recommend installing less-used applications on a shared server. However, over time, problems with memory and system resource utilization may occur. With a properly designed architecture, these applications can be partitioned without data loss. For detection, prevention of problems and future planning, the deployment of a monitoring system is reccomended.

From the result of the initial analysis, it is determined which application will be installed separately on the server. It is also advisable to install applications on separate servers that the customer uses regularly and contain thousands to millions of requests. Other, less computing and memory-intensive applications can be redistributed so that they can share computing and memory performance.

An essential part of every ELM deployment is the JTS application, which ensures the connection of individual applications. The recommended deployment of ELM packages in the infrastructure is to use IHS (IBM HTTP Server), which is configured as Reverse Proxy.

A necessary part of the solution is the implementation of the database server. The database server stores most of the data, so it is vital to pay extra attention to it when deploying and choosing a suitable product. The basic databases included in the installation include Apache Derby, which is intended primarily for testing purposes with a maximum of 10 users. This database is not intended for production environments and therefore we do not recommend it. Databases suitable for both production and testing include:

- IBM DB2

- Oracle

- Microsoft SQL Server

If the installation is carried out on the cloud, it is preferred to use the DB2 database. In the case of large companies, the Oracle database is more suitable. The use of these databases directly depends on the number of users and the amount of user data.

The use of these databases directly depends on the number of users and the amount of user data.

After the product is installed, we address the implementation of user authentication and security. For basic security, the WebSphere Liberty basic registry is used, which is available immediately after installation, but this option is recommended for testing purposes only. Other authorization options are, for example, the use of the LDAP protocol, which can be supplemented by the installation of JAS (Jazz Authorization Server). With the LDAPS authorization option, users are authorized using a hierarchical user structure. JAS extends the solution with the OAuth2.0 protocol. This solution also offers to authorize third-party applications.

Specialized solutions, approached and tips

From Softacus' point of view, we try to adapt to customers and carry out installations according to their usual standards. Based on the client's requirements, Softacus can work remotely, in the case of customers from critical infrastructure, we can also work on-site. VPN (Virtual Private Network) technologies are used for safe use.

During installations, it is necessary to ensure that installed IBM ELM applications do not have access to environments they are not authorized for. Attention should also be paid to the installation of applications that are listed as "package" (an application with a set of sub-applications to choose from - the client chooses which sub-applications he needs). After installation and subsequent setting of the environment, it is necessary to verify the functionality of the system, which is assisted by the testing team (Softacus).

One of the last steps after performing the installation and securing the server communication is to think about data backup. With customers, it is necessary to think about a "disaster recovery" plan, and it is also necessary to plan regular data backups to secure data against loss or system failure. Monitoring is an essential part of every infrastructure nowadays. Monitoring helps with prediction and improves response to adverse events on servers and applications.

Conclusion

ELM product implementation includes analysis, planning, deployment, and continuous monitoring. At Softacus, we work on improvement and efficiency during the entire course of the solution. It is a continuous and complicated process, during which it is possible to implement new functionalities and extensions into the customers' environment.

Článok: Installation of IBM ELM

Tento článok popisuje naše skúsenosti pri implementáciách produktu ELM (Engineering Lifecycle Management) od IBM.

Introduction

Aplikácie ELM sú vedúcou platformou pre komplexný vývoj produktov a softvéru. ELM rozširuje štandardné funkcionality ALM produktov. Poskytuje integrované komplexné riešenie, ktoré ponúka úplnú transparentnosť a sledovanie všetkých technických údajov, cez požiadavky, testovanie a nasadenie. ELM optimalizuje spoluprácu a komunikáciu medzi všetkými zainteresovanými stranami, zlepšuje rozhodovanie, produktivitu a celkovú kvalitu produktu. Tento produkt prešiel značnou aktualizáciou a zmenil aj svoj názov z CLM (Collaborative Lifecycle Management) na ELM (Engineering Lifecycle Management).

Hlavné rozdiely medzi CLM a ELM sa týkajú zmeny názvov produktov:

- Rational DOORS – v ELM sa nazýva IBM Engineering Requirements Management DOORS Family (DOORS)

- Rational DOORS Next Generation (DNG) – v ELM na nazýva IBM Engineering Requirements Management DOORS Next (DOORS Next)

- Rational Team Concert (RTC) – v ELM na nazýva IBM Engineering Workflow Management (EWM)

- Rational Quality Manager (RQM) – v ELM na nazýva IBM Engineering Test Management (ETM)

- A mnoho iných...

Od verzie 6.0.6.1 sa už zmenilo aj používateľské rozhranie. Niektorým aplikáciám sa však názvy nemenili, ako napríklad Report Builder, Global Configuration Management, Quality Management, atď.

K ďalším rozdielom medzi CLM a ELM patrí to, že ELM podporuje rôzne webové aplikačné servery, operačné systémy a databázy. Pre maximálnu flexibilitu pri prijímaní nových pokročilých funkcií a na zjenodučenie potenciálneho budúceho presunu na alebo z:

- IBM ELM na Cloud SaaS

- IBM ELM ako Managed Service

- IBM ELM kontajnerizované na RedHat OpenShift, ktoré je v súčasnosti vo vývoji

Aktuálna verzia, ktorú inštalujeme je 7.0.2 SR1 (ifix15). Tento produkt sa zameriava primárne na zákazníkov z oblastí zdravotníctva, armády, dopravy a mnohých ďalších kritických oblastí priemyslu a výroby. Medzi hlavné spoločné požiadavky týchto oblastí priemyslu patria rýchlosť, spoľahlivosť, flexibilita a bezpečnosť. Pre efektívne a spoľahlivé fungovanie ELM riešenie je dôležitá prvotná analýza požiadaviek a plánovanie.

Purpose of the article - challenges

Po definovaní požiadaviek klienta (požadované aplikácie, architektúra) sa pristupuje k samotnému nasadzovaniu produktu ELM. Pri návrhu zohľadňujeme požiadavky klienta, prispôsobujeme architektúru k už existujúcej infraštruktúre s technickými požiadavkami produktu ELM. ELM produkt podporuje implementáciu na operačných systémoch Linux (RedHat), Windows a IBM AIX.

IBM ELM ponúka viacero aplikácií, pričom nižšie sú uvedené niektoré z nich:

- JTS (Jazz Team Server)

- CCM (Change and Configuration Management)

- RM (Requirements Management)

- QM (Quality Management)

- ENI/RELM (IBM Engineering Lifecycle Optimization - Engineering Insights)

- AM (IBM Engineering Systems Design Rhapsody – Model Management)

- GC (Global Configuration Management)

- LDX (Link Index Provider)

- Jazz Reporting Service, obsahujúci viacero aplikácií:

- RS (Report Builder)

- DCC (Data Collection Component)

- LQE (Lifecycle Query Engine)

- JAS (Jazz Authorization Server)

- RPENG (Document Builder) – ktorý je implementovaný ako samostatná inštalácia

Pri veľkých infraštruktúrach odporúčame implementovať každú aplikáciu na samostatný server. To ale značne zvyšuje finančné požiadavky. Pre menej nižších požiadaviek na ELM hľadáme čo najvýhodnejší balans medzi výkonom a spoľahlivosťou za účelom dosiahnutia čo najlepších výsledkov vzhľadom k ich možným finančným obmedzeniam. Optimalizácia IBM ELM riešenia môže spočívať aj v tom, že na jednom serveri budú nasadené viaceré aplikácie naraz. Implementácia viacerých aplikácií na jednom serveri sa využíva pri menej používaných aplikáciách. Na základe našich skúseností sa nám overilo takéto logické usporiadanie aplikácií na serveroch (ASX):

- AS1: JTS, LDX, GC

- AS2: QM, CCM

- AS3: DCC, RPENG

- AS4: LQE, RS, RELM

- AS5: RM

Pre lepšie pochopenie architektúry uvádza nasledujúci obrázok návrh možnej logickej topológie:

Preferred solutions

Odporúčaním pre veľkých zákazníkov je inštalácia každej aplikácie na samostatný server, v prípade, že im to dovoľujú technické a finančné obmedzenia prostredia u zákazníka. Z toho ale vyplývajú zvýšené serverové náklady, ktoré pozostávajú z inštalácií, licencií, upgradov a „resources“ potrebných na prevádzku daného riešenia.

U malých a stredne veľkých zákazníkov na základe úvodnej analýzy odporúčame inštalovať menej používané aplikácie na zdieľaný server. Časom ale môže dôjsť k vzniku problémov s pamäťou a vyťaženosťou systémových zdrojov. Pri správne navrhnutej architektúre je možné tieto aplikácie rozdeliť bez straty dát. Pre detekciu, prevenciu problémov a plánovanie do budúcna je odporúčané nasadiť monitorovací systém.

Z výsledku prvotnej analýzy sa určuje, ktorá aplikácia bude inštalovaná samostatne na serveri. Na samostatné servery je tiež vhodné inštalovať tie aplikácie, ktoré zákazník využíva pravidelne a obsahujú obrovské množstvá požiadaviek. Ostatné, menej výpočtovo a pamäťovo náročné aplikácie je možné prerozdeliť tak, aby vedeli zdieľať výpočtový a pamäťový výkon.

Nevyhnutnou časťou každého ELM nasadenia je aplikácia JTS, ktorá zabezpečuje prepojenie jednotlivých aplikácií. Odporúčaným nasadením ELM balíkov do infraštruktúry je použiť IHS (IBM HTTP Server), ktorý je nakonfigurovaný ako Reverse Proxy.

Nutnou súčasťou riešenia je implementácia databázového servera. Databázový server uchováva väčšinu dát, preto je potrebné mu venovať zvýšenú pozornosť pri nasadzovaní a výbere vhodného produktu. Medzi základné databázy pri inštalácií patrí Apache Derby, ktorý je určená primárne na testovacie účely s obmedzením na maximálne 10 používateľov. Táto databáza nie je určená pre produkčné prostredia, a preto ju neodporúčame. Medzi databázy vhodné pre produkciu aj testovanie patria:

- IBM DB2

- Oracle

- Microsoft SQL Server

Ak je inštalácia realizovaná cez cloud, preferované použitie je DB2 databázy. V prípade veľkých firiem je preferované použiť Oracle databázu. Použitie týchto databáz sa priamo odvíja od počtu používateľov a od množstva používateľských dát.

Po nainštalovaní produktu sa venujeme implementácií overovania používateľov a zabezpečenia. Pre základné zabezpečenie sa používa WebSphere Liberty basic registry, ktorý je dostupný hneď po inštalácií, avšak táto možnosť sa odporúča iba na testovacie účely. Ďalšie možnosti autorizácie sú napríklad využitie protokolu LDAP, ktorý môže byť doplnený o inštaláciu JAS (Jazz Authorization Server). Pri možnosti autorizácie pomocou LDAPS sa používatelia autorizujú pomocou hierarchickej štruktúry používateľov. JAS rozširuje riešenie o OAuth2.0 protokol. Toto riešenie ponúka aj možnosť autorizácie aplikácie tretích strán.

Specialized solutions, approached and tips

Z pohľadu Softacusu sa snažíme prispôsobiť sa potrebám zákazníkom a vykonávať inštalácie v súlade s ich zaužívanými štandardmi. Na základe požiadaviek klienta vieme v Softacuse pracovať na diaľku, no v prípade zákazníkov z kritickej infraštruktúry vieme pracovať aj na mieste. Pre bezpečné používanie sa využívajú technológie VPN (Virtual Private Network).

Pri inštalácií je potrebné zabezpečiť, aby inštalované IBM ELM aplikácie nemali prístup na prostredia, ku ktorým nemajú autorizáciu. Pozor taktiež treba dávať na inštaláciu aplikácií, ktoré sú uvedené, ako “package“ (aplikácia, pri ktorej je na výber sada podaplikácií – klient si zvolí, ktoré podaplikácie potrebuje). Po inštalácií a následnom nastavení prostredia je potrebné overiť funkčnosť systému, pri čom asistuje testovací tím (Softacus).

Jedným z posledných krokov po vykonaní inštalácie a zabezpečení serverovej komunikácie je zvážiť zálohovanie dát. Pri zákazníkoch je potrebné sa zamýšľať nad „disaster recovery“ plánom (vysporiadaním sa s potenciálnymi nehodami) a taktiež je potrebné naplánovať pravidelné zálohovanie dát z dôvodu zabezpečenia dát pred stratou alebo zlyhaním systému. Nevyhnutnou časťou každej infraštruktúry v tejto dobe je už aj monitoring. Monitoring pomáha s predikciou a zlepšuje reakciu na nežiadúce udalosti na serveroch a aplikáciách.

Conclusion

Implementácia ELM produktu obsahuje analýzu, plánovanie, nasadzovanie a neustály monitoring. V Softacuse pracujeme na zlepšovaní a zefektívňovaní počas celého priebehu riešenia. Je to súvislý a komplikovaný proces, počas ktorého je možné implementovať nové funkcionality a rozšírenia do prostredia zákazníkov.

Introduction

Configuration Management has always been a difficult problem to solve, and it tended to get more and more difficult as the complexity of the products increased. As end-user expectations evolve, development organizations require more custom and tailored solutions. This introduces a necessity of effective management of versions and variants, which introduces more sophistication to the problem. The solution to being able to manage a complex product development lifecycle in this sense also requires a solution in a similar sophistication level.

The complexity of the end-products and being able to be tailored for the end user are not the only concerns for system developers. What about increasing competitive pressures, efficiency, etc.? These also have increasing importance when considering developing a system.

Challenges in Product Development

Of course, when we talk about developing a system, we should think about how companies will be able to develop multiple products, share common components, and manage variants, product lines, and even product families, especially if this system needs to be able to answer many different types of user profiles, expectations, needs, and so on.

One main concern for such companies is how to optimize the reuse of assets across such product lines or product families for effective and efficient development processes. Such reusability may introduce new risks without being governed by effective tools and technologies. For instance, a change to a component in one variant or one release cycle, may not be propagated correctly, completely, and efficiently to all other recipient versions and variants.

This may result in redundancy, error-prone manual work, and conflicts. We do not want to end up in this situation while seeking solutions for managing product lines, families, versions, and variants. In this article, we would like to provide a brief explanation of how IBM Engineering Lifecycle (ELM) Solution tackles such challenges by enabling a cross domain configuration management solution across requirements, system design, verification and validation, and all the way down to software source code management.

This solution, Global Configuration Management (GCM), is an optional application to the ELM Solution, which integrates several products to provide a complete set of applications for software or systems development.

The Solution

Global Configuration Management (GCM) offers the ability to reuse engineering artifacts from different system components with different lifecycle statuses across multiple parts of the system. Stakeholder, system, sub-system, safety, software, hardware requirements, verification and validation scenarios, test cases, test plans, system architectural design, simulation models, etc. can be reused as well as configured from a single source of truth for different versions and variants. Each of these information sources above has their own configuration identification as per their domain and lifecycle. One main challenge is to combine all these islands of information into one big configuration.

Fig1

In the example above, there are 3 global configurations:

- A configuration which was sent to manufacturing, the initial phase of the ACC (Adaptive Cruise Control)

- A configuration where the identified issues are being addressed (mainly to fix some software bugs)

- And a configuration on which engineering teams are currently working on to release the next functionally enriched revision.

All these configurations include a certain set of requirements; test cases relevant to those requirements which will be used to verify the requirements; a system architecture parts which satisfies the requirements and will be used during the verification process; and if the system also includes any software the relevant source code to be implemented.

Of course, the sketch above can be extended to a full configuration of an end product, for instance a complete stand alone system like an automobile. In this case, we define a component hierarchy to represent the car. For a reference on component structures for global configurations the article from IBM here can be used.

In such applications of GCM a combination of components of the system and streams can be used to as follows to define the whole system:

Fig 2: Image from article: "CLM configuration management: Defining your component strategy" - https://jazz.net/library/article/90573

In such examples, the streams represented as right arrows on an orange background (global stream: ) correspond to a body part of the system and is constituted by several configurations of relevant domains. For instance, an “air pressure sensor” is defined by a set of requirements for this part, as well as a system design for the sensor and its test cases to verify the requirements. All these domain level, local configurations correspond to different information assets. The global configuration can be defined in such a way that if a different configuration is defined for Ignition System ECU (different baseline is selected for Ignition System ECU Requirements, Test Plans and Cases and Firmware) we end up with a different variant of the system. This effect is illustrated in the figure below:

Fig 3: Developing 3 different variants at the same time, facilitating reusability.

We may have a base product and different variants being developed to this base product. The variants may share considerable commonality, and they are based on a common platform. This platform is considered as a collection of engineering assets like requirements, designs, embedded software, test plans and test cases etc.

Effective reuse of the platform engineering assets is achieved through the Global Configuration Management application. Via GCM, ELM can efficiently manage sharing of engineering artifacts and facilitate effective variant management. While a standard variant (or we can call it also as Core Platform) is being developed (see Fig 3), some artifacts constituting specifications, test cases, system models and source code for sub systems, components or sub-components can be directly shared and reused, while others may undergo small changes to make a big difference. Sometimes there might be a fix that surfaces in one variant and should be propagated through other variants as well because we may not know if that variant is affected by this issue or not. Such fixes can easily and effectively be propagated through all variants making sure that the issue is addressed on those variants as well. This is because all artifacts regardless of being reused or changed, are based on one single source of truth and share the same basis. This makes comparison of configurations (variants) very easy and just one click away.

ELM with GCM manages strategic reusability by facilitating parallel development of the “Standard Variant” (Core Platform) and allows easy and effective configuration comparison and change propagation across variants.

Tips and Best Practices for GCM

The technology and implementation behind GCM are very enchanting. Talking about developing systems, especially for safety-critical systems, a two-way linking of information is required. Information here is any engineering artifact, from requirements to tests, reviews, work items, system architecture, and code changes.

So one should maintain a link from source to target and also the backward traceability should be satisfied from target to source. How is this possible with variants? There must be an issue here, especially when talking about traceability between different domains, say between requirements and tests.

To be more specific, let's look at a simple example:

In this example, we have two variants. The initial variant is made of one requirement and a test case linked to that requirement. Namely, "The system shall do this," and the test case is specifying how to verify this. The link between the requirement and the test case is valid (green connection). Now, in the second variant, we have to update the requirement. Because the system shall do this under a certain condition. Both requirements have the same id. They are two different versions of the same artifact. We can compare these configurations and see how the specification has changed from version 1 to version 2. But now, what will happen to the link? Is it still valid? Is the test case really testing the second version of the requirement? We have to check. That is why the link is not valid anymore in the second variant.

The team working on the first variant sees that the test case exactly verifies version 1 of the requirement. But the team working on the second variant is not sure. There is a suspect between the requirement and the test case because the requirement has changed. How is this possible with bidirectional links? Actually, it is not. Because it is supposed to be the same link as well, like it is the same version of the requirement. So we also kind of version control the links. However, if we anchor both sides of the links to the different artifacts on different domains, creating two instances for the same link is not possible. ELM enables this by deleting the reverse direction of links when Global Configuration is enabled and maintains the backward links via indices. This is the beauty of this design. Cause links from source to target exist but, looking backwards, are maintained by link indices, which makes the solution very flexible for defining variants and having different instances of links between artifacts among different variants.

There are some considerations to make when enabling Global Configurations in projects. The main question I ask to decide whether to enable it or not is, do I need to manage variants? How many variants? Is reusability required? Answers to these questions will shed light on the topic without any second thoughts.

Then what is a component, how granular should it be, how to decide on streams and configurations. What domains should be included in GC, when to baseline, definition of a taxonomy for system components. Would also be typical questions on designating the global configuration environment. The discussion of this topic requires a separate article to focus on. Let's leave that for next time, then.

Conclusion

IBM ELM’s Global Configuration Management (GCM) is a very specific solution for Version and Variant Management and is designed to enable Product Line Engineering, by facilitating effective and strategic reuse.

With GCM implemented, the difficulties around sharing changes among different variants disappear, and every change can be easily and effectively propagated among different product variants.

GCM mainly helps organizations reduce time to market and cost by reducing error prone manual and redundant activities.

It also improves product quality by automating the change management process which can be used by multiple product development teams and multiple product variants.

References

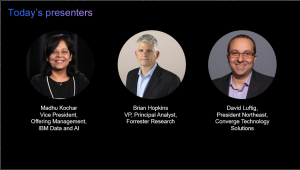

With emerging technologies in Edge, Digital Twin, Asset Performance Management (APM) and AI/ML, how can your organization justify their business value and make the case for investment? What are the new measures and KPIs your organization should be tracking to reinforce the justification of these emerging technologies?

Join us to learn how we can help you make the business case for 21st Century maintenance and reliability.

Tom Woginrich is a Principal Value Consultant in the IBM Sustainability Software division. He is a subject matter expert in Asset Management best practices and has worked with many companies to transform business processes and implement technology improvements. Mr. Woginrich has over 30 years of Asset Management experience leading and working in large enterprises. He has a broad range of industry experience that includes Pulp & Paper, Energy & Utilities, Chemicals & Petroleum, Automotive, Oil & Gas, Life Sciences/Pharmaceuticals, Facilities, and Semiconductor Manufacturing.

His working experience and knowledge in operations, field services and maintenance give him a very broad comprehension of the total asset management lifecycle and the value opportunities contained within. It is this background, together with his experience managing and deploying technology, that helps him facilitate the business value case conversation that should accompany technology implementations.

Location

Leif Davidsen - Program Director, Product Management, Cloud Pak for Integration

Leif Davidsen is the Product Management Program Director for IBM Cloud Pak for Integration. Leif has worked at IBM's Hursley Lab since 1989, having joined with a degree in Computer Science from the University of London.

Andy Garratt - Technical Product Manager, IBM Cloud Pak for Integration

Andy Garratt is an Offering Manager for IBM’s Cloud Pak for Integration, based in IBM’s Hursley labs in the UK. He has over 25 years’ experience in architecting, designing, buildingand implementing integration solutions for customers around Europe and around the world and loves to meet users, IBMers, partners and customers to find out about all the great things they’re doing with IBM integration products.

Over the last few months, we’ve seen the role of AI grow from an important technology tool to truly a force for good. From automating answers to symptom and other citizens questions to finding insights in public data, AI has helped people deal with an unprecedented challenge at a scale never before seen.

But as organizations and individuals emerge from this pandemic, we see the role of AI continuing to be critical – not only to respond to what’s happening today, but to plan for an uncertain future.

Join our panel of AI experts and business leaders as they share key learnings from the past few months and what organizations can do to prepare for and manage future changes with AI.

More information here

This is session 7 of 7 that covers the IBM ELM tool suite.

IBM Engineering Lifecycle Management (ELM) is the leading platform for today’s complex product and software development. ELM extends the functionality of standard ALM tools, providing an integrated, end-to-end solution that offers full transparency and traceability across all engineering data. From requirements through testing and deployment, ELM optimizes collaboration and communication across all stakeholders, improving decision- making, productivity and overall product quality.

Presented by: Jim Herron of Island Training.